AI Transparency and Accountability: The Foundation of Trustworthy AI Systems

As artificial intelligence (AI) systems are integrated into more and more domains, concerns about their impact on individual and societal wellbeing have grown. A major factor behind these concerns is the lack of transparency and accountability in AI decision-making processes. This article explores why AI transparency and accountability matter, what makes them hard to achieve, and how they can be measured.

The Importance of AI Transparency and Accountability

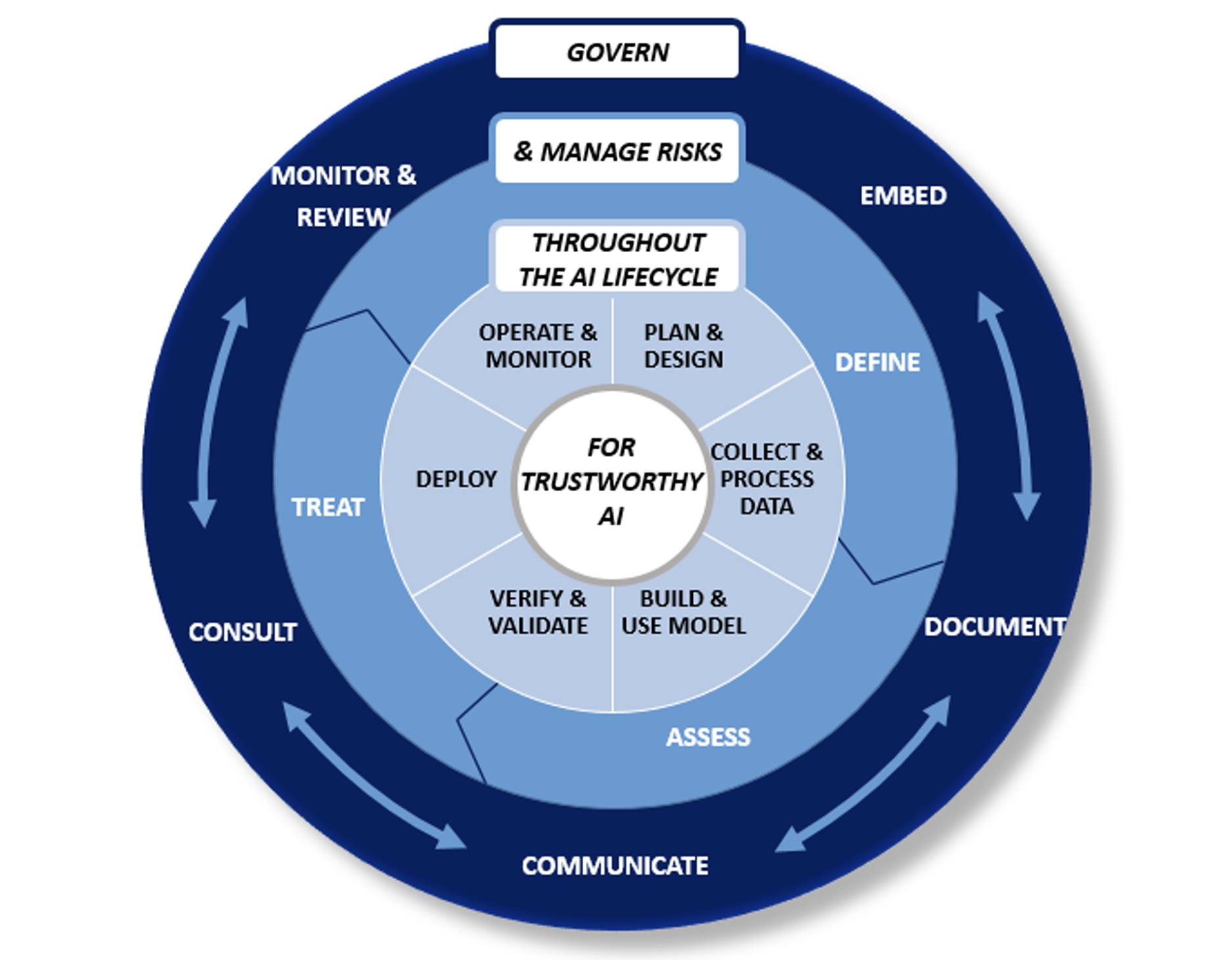

AI transparency refers to the practice of providing clarity and openness about how AI systems are developed, how they make decisions, and how they process data. This is essential for building trust in AI systems and ensuring that they are aligned with ethical and societal values. AI accountability, on the other hand, means that an AI system's decisions and actions can be explained and that responsibility for their consequences is clearly assigned.

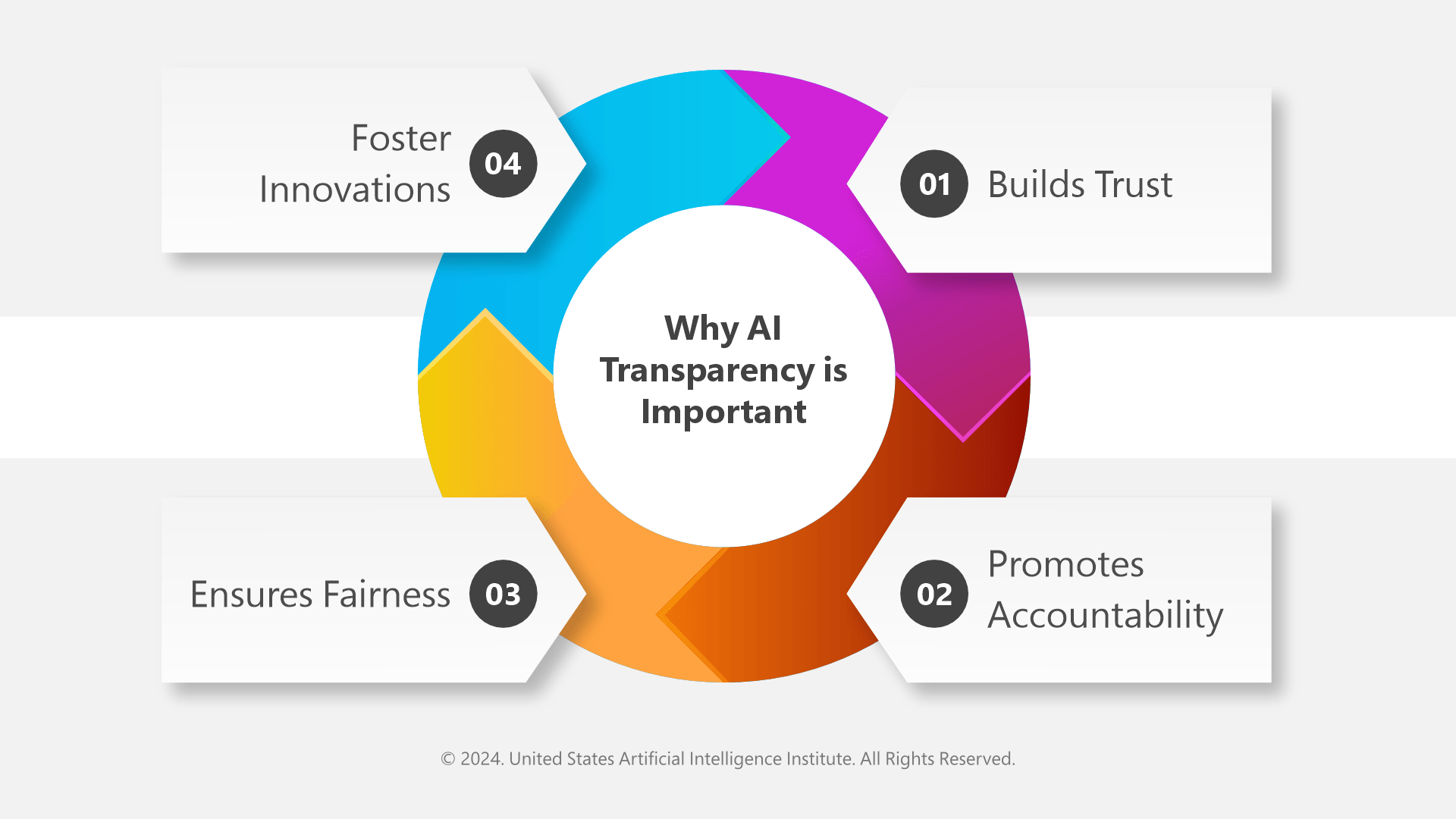

Benefits of AI Transparency and Accountability

- Improved trust: By providing transparency into AI decision-making processes, organizations can build trust with their stakeholders, including customers, employees, and the general public.

- Reduced risk: Transparency makes bias and errors in AI systems easier to detect and correct before they lead to costly mistakes and reputational damage.

- Enhanced accountability: By holding AI systems accountable for their decisions and actions, organizations make clear who is responsible for the consequences and who can be held liable for any harm caused.

- Increased adoption: As AI transparency and accountability become more widespread, organizations will be more likely to adopt AI systems, knowing that they can trust the technology and its outcomes.

Challenges to Achieving AI Transparency and Accountability

While the benefits of AI transparency and accountability are clear, achieving them can be challenging. Some of the key challenges include:

- Complexity: AI systems are often complex and difficult to understand, making it challenging to provide transparency into their decision-making processes.

- Explainability: Many AI systems behave as black boxes: their internal reasoning is not directly interpretable, which makes individual decisions and actions difficult to explain.

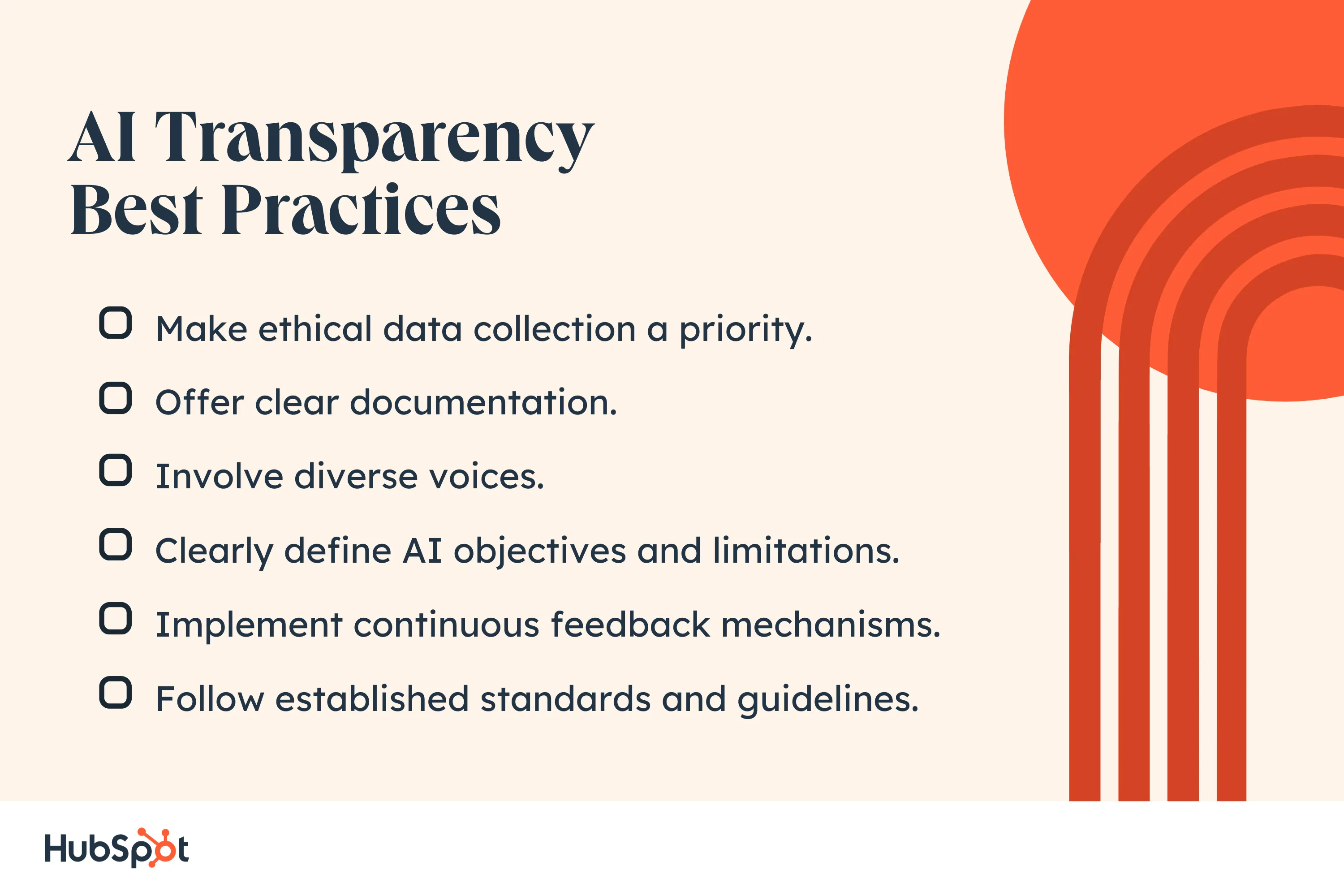

- Lack of standards: There is currently a lack of standards and guidelines for AI transparency and accountability, making it challenging for organizations to know what is expected of them.
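The explainability challenge is easiest to see by contrast with a model that is not a black box. The following is a minimal sketch, assuming a hypothetical linear scoring model; the weights, bias, and feature names are invented for illustration and do not come from any real system. For such a model, each feature's contribution to a decision can be read off directly, which is exactly what more complex models do not offer.

```python
def explain_linear_score(weights, bias, features):
    """Return the score of a linear model and each feature's contribution.

    Every feature named in `weights` must also appear in `features`;
    a missing feature raises KeyError rather than being silently ignored.
    """
    # For a linear model, a feature's contribution is simply weight * value.
    contributions = {name: weights[name] * features[name] for name in weights}
    score = bias + sum(contributions.values())
    # Rank features by how strongly they moved the score, in either direction.
    ranked = sorted(contributions.items(), key=lambda kv: abs(kv[1]), reverse=True)
    return score, ranked


# Hypothetical model parameters and applicant data (illustrative only).
WEIGHTS = {"income": 0.5, "debt": -1.0, "years_employed": 0.25}
BIAS = 1.0
APPLICANT = {"income": 4.0, "debt": 1.5, "years_employed": 2.0}

score, ranked = explain_linear_score(WEIGHTS, BIAS, APPLICANT)
print(f"score = {score}")
for name, contribution in ranked:
    print(f"{name}: {contribution:+.2f}")
```

A deep neural network has no comparably direct decomposition, so explanations for it have to be approximated with additional techniques, which is one reason explainability remains a challenge.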

Metrics for Measuring AI Transparency and Accountability

Measuring AI transparency and accountability means assessing a system along several dimensions, each of which needs its own metrics:

- Explainability: This refers to the ability of an AI system to explain its decisions and actions.

- Transparency: This refers to the ability of an AI system to provide clear and open information about its decision-making processes.

- Accountability: This refers to the responsibility of an AI system to explain its decisions and actions, and to be held accountable for its consequences.

- Human oversight: This refers to the ability of human stakeholders to review and correct AI decisions and actions.
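The dimensions above can be turned into numbers once decisions are logged. The following is a rough sketch under stated assumptions: the `DecisionRecord` structure, its field names, and the three indicators are hypothetical choices made for illustration, not an established standard. It computes how many decisions shipped with an explanation, how many were reviewed by a human, and how often a reviewer changed the outcome.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class DecisionRecord:
    """One logged AI decision (hypothetical schema, for illustration)."""
    decision_id: str
    model_output: str
    explanation: Optional[str]  # None if no explanation was produced
    reviewed_by_human: bool
    final_output: str           # outcome after any human correction


def explanation_coverage(records: List[DecisionRecord]) -> float:
    """Share of decisions that came with a non-empty explanation."""
    if not records:
        return 0.0
    explained = sum(1 for r in records if r.explanation and r.explanation.strip())
    return explained / len(records)


def review_rate(records: List[DecisionRecord]) -> float:
    """Share of decisions that a human reviewed."""
    if not records:
        return 0.0
    return sum(1 for r in records if r.reviewed_by_human) / len(records)


def override_rate(records: List[DecisionRecord]) -> float:
    """Among reviewed decisions, share where the reviewer changed the outcome."""
    reviewed = [r for r in records if r.reviewed_by_human]
    if not reviewed:
        return 0.0
    changed = sum(1 for r in reviewed if r.final_output != r.model_output)
    return changed / len(reviewed)


# Made-up decision log used only to exercise the functions.
LOG = [
    DecisionRecord("d1", "approve", "income above threshold", True, "approve"),
    DecisionRecord("d2", "deny", None, True, "approve"),
    DecisionRecord("d3", "deny", "short credit history", False, "deny"),
    DecisionRecord("d4", "approve", "stable employment", False, "approve"),
]

print("explanation coverage:", explanation_coverage(LOG))
print("review rate:", review_rate(LOG))
print("override rate:", override_rate(LOG))
```

These indicators only show whether explanations and reviews exist, not whether they are any good; judging the quality of an explanation still requires human assessment.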

Regulatory Demands for AI Transparency and Accountability

Regulatory demands are increasing, with many governments and regulatory bodies calling for greater transparency and accountability in AI systems. Some of the key demands include:

- Explainability: Regulators are demanding that AI systems be able to explain their decisions and actions.

- Transparency: Regulators are demanding that AI systems provide clear and open information about their decision-making processes.

- Accountability: Regulators are demanding that AI systems be held accountable for their consequences.

Conclusion

AI transparency and accountability are essential for building trust in AI systems and ensuring that they are aligned with ethical and societal values. While achieving AI transparency and accountability can be challenging, it is a critical step in ensuring that AI systems are safe and responsible. By measuring AI transparency and accountability, regulatory bodies can ensure that AI systems meet the necessary standards and guidelines.